Hermes Gemma 4 is how you run a proper AI agent for free, forever, on your own machine — and most people have no idea this is even possible yet.

Pull up a chair.

I want to show you something that changed the maths on my AI business overnight.

Google just dropped Gemma 4, its latest open-source lightweight model.

I plugged it into Hermes.

And now I've got 20 sub-agents running locally, for free, with a 256K context window.

No API bills.

No rate limits.

No "please upgrade to Pro" popups.

Let me walk you through the exact setup.

The 60-Second Pitch For Hermes Gemma 4

If you only read one section of this article, read this.

Gemma 4: Google's latest lightweight open-source model. Runs locally. Small footprint. Surprisingly capable.

Hermes: my favourite AI agent. Orchestrates tools, spawns sub-agents, keeps context clean.

Hermes Gemma 4: the two together, wired up through Ollama.

The result: a completely free AI agent that runs on your computer.

And here's the kicker — the bigger Gemma 4 variants pack a 256K context window, which is actually bigger than MiniMax M2.7 and a lot of other frontier cloud models.

Local. Free. Bigger context than cloud.

That's not supposed to be possible.

It is.

What You Need First

Before anything else, two prereqs:

1. Hermes installed. If you haven't got Hermes on your machine, check out my Ollama + Hermes walkthrough first.

2. A decent machine. For the smaller Gemma 4 variants, any modern laptop will do. For the 256K-context 18GB/20GB variants, the model alone needs more than 16GB of memory, so plan on 32GB+ RAM and a solid GPU.

That's it.

No Docker.

No config files.

No Python venvs.

Just Hermes + Ollama + Gemma 4.

Step-By-Step Setup

Step 1 — Get Ollama

Ollama is the local runtime that hosts Gemma 4 on your machine.

Head to ollama.com.

Grab the install command.

Paste it into your terminal.

Run it.

Ollama installs as a background service — you don't have to think about it after this.

Verify it's alive with ollama list.
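If you'd rather check from a script (say, one that runs before Hermes starts), a minimal Python sketch can hit Ollama's local HTTP API directly. It assumes Ollama's default address, http://localhost:11434, and its GET /api/tags route, which lists installed models. The helper name ollama_is_up is just for illustration.

```python
import json
import urllib.request
from urllib.error import URLError

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def ollama_is_up(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> bool:
    """Return True if the Ollama server answers on /api/tags, False otherwise."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            json.load(resp)  # a healthy server replies with a JSON body
            return resp.status == 200
    except (URLError, OSError, ValueError):
        # Connection refused, timeouts and non-JSON replies all count as "not up".
        return False


if __name__ == "__main__":
    print("Ollama is running" if ollama_is_up() else "Ollama is not reachable")
```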

Step 2 — Pull Gemma 4

On ollama.com, navigate to Models.

Click Gemma 4.

Pick your variant based on your hardware:

- ~128K context variants → smaller, runs on most modern laptops

- 256K context variants (18GB, 20GB) → bigger, needs more RAM

Ollama shows you the terminal command to install whichever you pick.

Run it.

Wait for the download.

Gemma 4 is now on your machine.
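Before picking a variant, a quick sanity check helps. As a rough rule of thumb (my assumption, not an official Ollama figure), a model needs at least its download size in free RAM or VRAM, plus headroom for the context window. This Python sketch encodes that check; the 1.2× headroom factor and the variant names and sizes are illustrative placeholders, not measured values.

```python
from typing import Dict, Optional


def fits_in_memory(model_size_gb: float, memory_gb: float, headroom: float = 1.2) -> bool:
    """Rough check: does a model of this size fit, with extra room for context?

    headroom=1.2 is an illustrative assumption; long contexts (e.g. 256K)
    can need considerably more than this.
    """
    if model_size_gb <= 0 or memory_gb <= 0:
        raise ValueError("sizes must be positive")
    return model_size_gb * headroom <= memory_gb


def pick_largest_fitting(variants: Dict[str, float], memory_gb: float) -> Optional[str]:
    """Return the name of the largest variant that fits, or None if none do."""
    fitting = {name: size for name, size in variants.items() if fits_in_memory(size, memory_gb)}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)


# Hypothetical variant names and sizes in GB; check ollama.com for the real ones.
EXAMPLE_VARIANTS = {"small-variant": 5.0, "big-variant-18gb": 18.0, "big-variant-20gb": 20.0}

print(pick_largest_fitting(EXAMPLE_VARIANTS, memory_gb=16))  # small-variant
print(pick_largest_fitting(EXAMPLE_VARIANTS, memory_gb=32))  # big-variant-20gb
```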

Step 3 — Wire Hermes To Gemma 4

Open your terminal.

Start a new Hermes chat.

Run:

hermes model

You'll see a list of model endpoints.

Scroll to Custom Endpoint. Select it.

Hermes asks for a URL.

Paste the Ollama local URL: http://localhost:11434.

Make sure Ollama is actually running in the background before you do this step. Otherwise Hermes can't reach it.

Hermes asks for an API key.

Two options:

- Leave it blank

- Type "Ollama"

Both work. I type "Ollama" by habit.

Hermes pings Ollama, pulls the list of models you've got installed, and shows them to you.

Gemma 4 latest will be in the list.

Select it.

Leave the next prompt blank.

Run Hermes.

That's it.

You're now running Hermes Gemma 4 locally.
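For the curious, here's roughly what a custom endpoint talks to. Ollama serves an OpenAI-compatible API under /v1 on the same port and doesn't check the API key, which is presumably why both a blank key and "Ollama" work. I haven't confirmed whether Hermes appends /v1 itself; if the bare URL fails, http://localhost:11434/v1 is worth a try. This stdlib-only Python sketch builds and sends one chat request; the model tag "gemma4:latest" is a placeholder for whatever ollama list shows.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str, api_key: str = "ollama") -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for Ollama's /v1 endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Ollama ignores the key; OpenAI-style clients usually still send one.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def chat(base_url: str, model: str, prompt: str) -> str:
    """Send one prompt to a running Ollama server and return the reply text."""
    req = build_chat_request(base_url, model, prompt)
    with urllib.request.urlopen(req, timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With Ollama running, chat("http://localhost:11434", "gemma4:latest", "Say hello in five words.") should return the model's reply, once the tag matches one you actually pulled.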

🔥 Skip the trial-and-error. Inside the AI Profit Boardroom, I've got a full Hermes automation section with step-by-step video tutorials — the exact Ollama setup, the custom endpoint flow, sub-agent workflows, and yesterday's walkthrough of the new Hermes 0.7 update. Weekly coaching calls where I share my screen and answer your Qs live. 3,000+ members already inside running this stuff daily. → Get the full training here

Why Hermes Gemma 4 Matters For Sub-Agents

Here's the thing most people miss.

Agent workflows aren't about one big model thinking really hard.

They're about many smaller models running in parallel.

Summarising.

Classifying.

Filling in templates.

Pulling data.

Sending notifications.

None of that needs frontier intelligence.

It needs cheap, fast, reliable.

Hermes Gemma 4 is exactly that.

Here's how I actually run it:

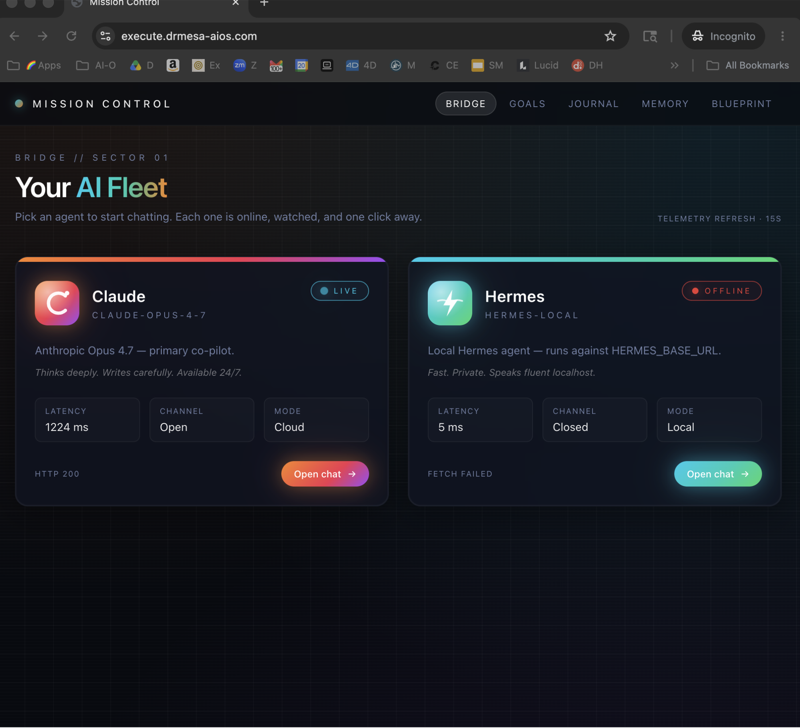

- Orchestrator Hermes session — running Claude Opus 4.7 or MiniMax M2.7 for the hard reasoning

- 10-20 sub-agent Hermes sessions underneath — all running Gemma 4 locally, grinding through bulk work

Zero cost on the sub-agents.

The orchestrator is the only thing touching a paid API.

This is how you scale AI work without a $2,000 OpenAI bill at the end of the month.
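The orchestrator/sub-agent split is easy to picture in code. This Python sketch is not how Hermes implements it internally; it only illustrates the pattern: one orchestrator call on a paid cloud model plans the work, then a thread pool fans bulk tasks out to a free local model. call_model, the backend labels and the task list are hypothetical stand-ins.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# A "model call" is any function taking (backend, prompt) and returning text.
# In real use it would wrap a cloud API or Ollama; here it is a placeholder.
ModelCall = Callable[[str, str], str]


def run_with_subagents(
    call_model: ModelCall,
    goal: str,
    bulk_tasks: List[str],
    max_workers: int = 10,
) -> dict:
    """Plan with the paid orchestrator backend, do bulk work on the local one."""
    plan = call_model("cloud-orchestrator", f"Plan how to: {goal}")
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map keeps results in the same order as bulk_tasks.
        results = list(pool.map(lambda task: call_model("local-gemma", task), bulk_tasks))
    return {"plan": plan, "results": results}


def fake_call(backend: str, prompt: str) -> str:
    """Stand-in model so the sketch runs without any server."""
    return f"[{backend}] {prompt[:20]}"


out = run_with_subagents(fake_call, "summarise 3 transcripts", ["t1", "t2", "t3"])
print(out["results"])  # ['[local-gemma] t1', '[local-gemma] t2', '[local-gemma] t3']
```

On real hardware, Ollama may queue parallel requests rather than run them all at once; how many it serves concurrently depends on its settings (such as the OLLAMA_NUM_PARALLEL environment variable) and your available memory.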

I talked about the multi-agent idea more in Hermes agent mission control if you want the deeper rationale.

The Context Window Situation

Let's talk about the 256K context window properly.

The bigger Gemma 4 variants — the 18GB and 20GB ones — run with 256K context.

That's bigger than MiniMax M2.7.

Bigger than a lot of frontier cloud models.

Free. Local.

What does that actually unlock?

Whole codebase reasoning. Drop a mid-sized repo into the context. Gemma 4 can reason across all of it at once.

Massive document synthesis. Feed it hours of transcripts, a whole book, a year of emails. It handles it.

Long-running agent memory. Sub-agents that track context across dozens of turns without losing the plot.

You couldn't do any of that on a laptop two years ago.

Now you can.

For free.
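Two practical checks before you drop a repo into that window. First, make sure it fits: a common rough heuristic is about 4 characters per token (an approximation, not Gemma's real tokenizer). Second, Ollama may load a model with less context than its maximum, and its native /api/chat endpoint accepts an options.num_ctx setting to raise it. A Python sketch of both; the 256K figure comes from this article (rounded down to 256,000), while the file contents and model tag are placeholders.

```python
import json
from typing import Dict, List

CHARS_PER_TOKEN = 4        # rough heuristic, not Gemma's actual tokenizer
CONTEXT_TOKENS = 256_000   # "256K", rounded down to be conservative


def estimate_tokens(texts: List[str]) -> int:
    """Very rough token estimate: total characters divided by 4, rounded up."""
    total_chars = sum(len(t) for t in texts)
    return -(-total_chars // CHARS_PER_TOKEN)  # ceiling division


def fits_context(texts: List[str], reserve_for_answer: int = 8_000) -> bool:
    """True if the input plus room for the model's reply fits in the window."""
    return estimate_tokens(texts) + reserve_for_answer <= CONTEXT_TOKENS


def ollama_chat_payload(model: str, prompt: str, num_ctx: int = CONTEXT_TOKENS) -> Dict:
    """Body for POST /api/chat, asking Ollama for a larger context window."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "options": {"num_ctx": num_ctx},
        "stream": False,
    }


files = ["def f():\n    return 1\n" * 1000]  # placeholder "repo" contents
print(estimate_tokens(files), fits_context(files))
print(json.dumps(ollama_chat_payload("gemma4:latest", "Summarise this repo")["options"]))
```

A bigger num_ctx also means more memory, so the largest window only makes sense on the big-RAM setups described earlier.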

Hermes + MiniMax M2.7 + Gemma 4: The Full Agentic Stack

Same custom endpoint flow in Hermes works for MiniMax M2.7 cloud too.

Run hermes model.

Custom endpoint.

Pick MiniMax M2.7 cloud from the model list (it was option 2 on my machine).

Leave the next prompt blank.

Run.

MiniMax M2.7 is agentic, self-improving, and designed from the ground up to run tools.

You can even run OpenClaw with MiniMax M2.7 through Ollama. It's a beautiful combo for automation work.

If you haven't seen the Hermes vs OpenClaw breakdown yet, that explains which one to pick for which job.

The real power move is running all three: Hermes for orchestration, Gemma 4 for free sub-agents, and MiniMax M2.7 when you need agentic cloud intelligence.

Local vs Cloud — When To Pick Which

Straight-up framework I use:

| Job | Best Pick |
|---|---|
| Hardest reasoning task of the day | Cloud (Claude Opus 4.7 or MiniMax M2.7) |
| 20 sub-agents running overnight | Hermes Gemma 4 locally |
| Client data that can't leave your machine | Hermes Gemma 4 locally |
| Massive 200K+ context job | Hermes Gemma 4 (big variant) or cloud |
| Bulk tool-calling work | Either (Gemma 4 is plenty) |
| Deep research synthesis | Cloud |

Cloud models are better.

Local models are free.

Use both.
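If you script your agents, the same framework can live in a lookup table. A minimal Python sketch; the job keys and the "cloud"/"local" labels are made up for illustration, with "local" meaning whatever Gemma 4 tag you pulled.

```python
from typing import Dict

# Mirrors the decision table: job type -> preferred backend.
ROUTING: Dict[str, str] = {
    "hard_reasoning": "cloud",
    "overnight_subagents": "local",
    "private_client_data": "local",
    "huge_context": "local",      # big Gemma 4 variant; cloud also works
    "bulk_tool_calls": "local",   # either works; local is free
    "deep_research": "cloud",
}


def pick_backend(job: str, private: bool = False) -> str:
    """Route a job; private data always stays local, whatever the job type."""
    if private:
        return "local"
    # Unknown job types default to local, the free option.
    return ROUTING.get(job, "local")
```

Defaulting unknown jobs to local keeps surprise costs at zero; flip that default if quality matters more than spend for your workload.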

Common Problems And Fixes

A few things I've watched people trip over:

"Hermes can't find any models." Ollama isn't running. Open a second terminal and run ollama list. If it errors, restart Ollama (or start the server manually with ollama serve).

"Gemma 4 isn't in the model list." The download didn't finish or never ran. Go back to the Gemma 4 page under Models on ollama.com, re-run the install command, then confirm it shows up in ollama list.
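The first two problems can be told apart automatically. This Python sketch queries Ollama's GET /api/tags: no answer means the server is down, and an answer without a Gemma model means the pull didn't land. The function names and messages are my own, not part of Hermes or Ollama.

```python
import json
import urllib.request
from typing import Optional


def diagnose(tags_json: Optional[str]) -> str:
    """Map Ollama's /api/tags reply (None if unreachable) to a suggested fix."""
    if tags_json is None:
        return "Ollama isn't running: start it (e.g. `ollama serve`) and retry."
    names = [m.get("name", "") for m in json.loads(tags_json).get("models", [])]
    if not any("gemma" in n.lower() for n in names):
        return "No Gemma model installed: re-run the pull command from ollama.com."
    return "Looks fine: Ollama is up and a Gemma model is installed."


def fetch_tags(base_url: str = "http://localhost:11434") -> Optional[str]:
    """Return the raw /api/tags body, or None if the server can't be reached."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=2) as resp:
            return resp.read().decode("utf-8")
    except OSError:
        # URLError, refused connections and timeouts are all OSError subclasses.
        return None
```

Run print(diagnose(fetch_tags())) from a second terminal to get a one-line verdict.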

"My machine is crawling." You probably picked a variant too big for your RAM. Drop to a smaller Gemma 4 variant.

"Outputs are a bit dumb." Local models need slightly more prompt structure than cloud. Be more specific. Give examples. It picks up fast.
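Here's what "more structure" can look like in practice: a system instruction plus a couple of worked examples ahead of the real input, in the OpenAI-style message format that Ollama's /v1/chat/completions accepts. The email-classification task and examples are hypothetical; the point is the shape of the prompt.

```python
from typing import Dict, List, Tuple


def few_shot_messages(
    instruction: str,
    examples: List[Tuple[str, str]],
    user_input: str,
) -> List[Dict[str, str]]:
    """Build a chat prompt: system rules, worked examples, then the real input."""
    messages = [{"role": "system", "content": instruction}]
    for example_input, example_output in examples:
        # Each example is a user turn followed by the ideal assistant reply.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": user_input})
    return messages


msgs = few_shot_messages(
    "Classify each email as SALES, SUPPORT or SPAM. Reply with the label only.",
    [("Can I get a refund?", "SUPPORT"), ("Buy cheap watches now!!!", "SPAM")],
    "Do you offer team pricing?",
)
print(len(msgs))  # 6
```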

FAQ — Hermes Gemma 4

What is Hermes Gemma 4?

It's the combination of the Hermes AI agent running on top of Google's Gemma 4 open-source model via Ollama — a fully local, free-to-run AI agent stack.

What's the setup command flow for Hermes Gemma 4?

hermes model → select custom endpoint → paste Ollama URL → API key = "Ollama" (or blank) → select Gemma 4 latest → leave blank → run Hermes.

Is Gemma 4 actually free?

Yes. It's Google's open-source lightweight model. No API fees, no subscription, no usage tracking. Your only cost is electricity.

What's Gemma 4's context window?

Smaller variants run around 128K context. The bigger 18GB and 20GB variants run at 256K — larger than MiniMax M2.7 and many frontier cloud models.

Can I run sub-agents on Hermes Gemma 4?

Yes — this is arguably the best use case. Run your orchestrator on a cloud model and fire sub-agents at local Gemma 4 for free bulk work.

Do I need Hermes to run Gemma 4?

No, you can run Gemma 4 directly through Ollama without Hermes. But Hermes turns it into an agent that can call tools, manage context, and work autonomously — which is the whole point.

Related Reading

- Ollama + Hermes — the foundation setup

- Hermes agent mission control — multi-agent orchestration

- Hermes vs OpenClaw — picking between agents for the job

Final Word

Hermes Gemma 4 is the best "free AI agent" combo I've ever tested.

256K context window.

Free forever.

Sets up in 5 minutes.

Works beautifully for sub-agents.

No excuse not to try it tonight.

🚀 Want the playbook, not just the tutorial? Inside the AI Profit Boardroom, I've got a 2-hour course on using Hermes to save time and grow a real business. Plus a 6-hour OpenClaw course, daily SOPs, weekly coaching calls, and 145 pages of member wins. Mission: stop people falling behind on AI. → Join the Boardroom

Video notes + links to the tools 👉 https://www.skool.com/ai-profit-lab-7462/about

Learn how I make these videos 👉 https://aiprofitboardroom.com/

Get a FREE AI Course + Community + 1,000 AI Agents 👉 https://www.skool.com/ai-seo-with-julian-goldie-1553/about

Run Hermes Gemma 4 today and stop paying for things you can get for free.