OpenClaw Kimi K2.6 just became the first free agentic AI stack I actually trust on real work — and I've been testing it daily for a week.

Here's what nobody's telling you.

Most "free AI agent" tutorials are either outdated or the model underneath can barely call a tool.

Kimi K2.6 is the exception.

It's open-source.

It's agentic by design.

And it pairs with OpenClaw in literally 5 minutes.

Let me walk you through the whole setup, the real test results, and the pricing thing that catches everyone out.

What OpenClaw Kimi K2.6 Actually Is

Two things glued together.

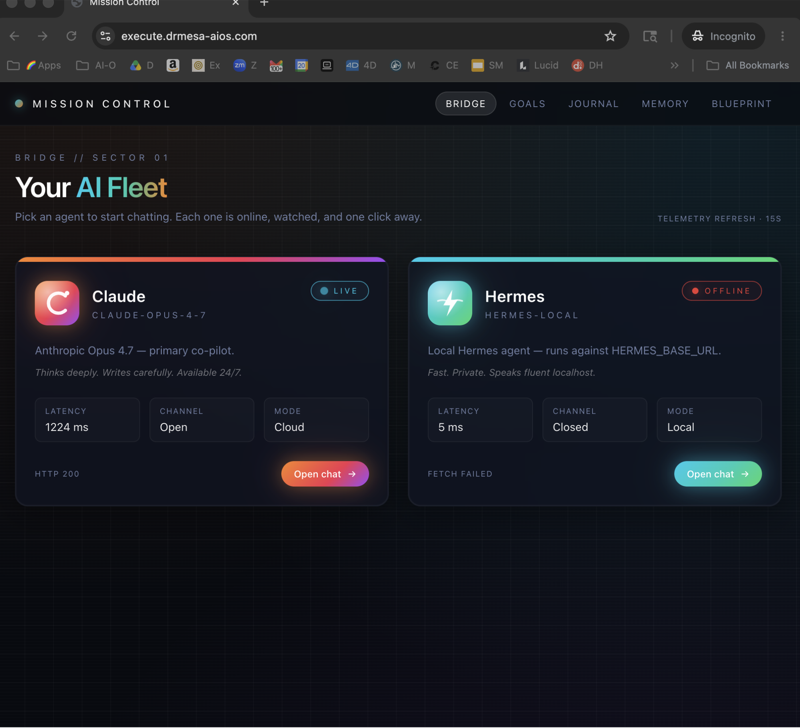

OpenClaw — a shell that lets you run AI agents with tools, web access, and file work.

Kimi K2.6 — an open-source agentic model from the Kimi team.

Agentic means it's trained to do things, not just chat about them.

Kimi's own blog post specifically named OpenClaw and Hermes as the two tools it plays nicest with.

That's the headline.

The rest is execution.

The Setup (5 Minutes, Honest)

Here's the exact sequence.

No guesswork.

One-Click Setup Command

Grab the one-click setup from Ollama.

Paste it into terminal.

This installs Ollama or updates you to the latest version.

Always update.

Older versions don't show Kimi K2.6 cloud in the Models list.
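If you want to confirm which Ollama version you ended up with, here's a minimal sketch. It assumes the `ollama` binary is on your PATH and that `ollama --version` prints a dotted version number somewhere in its output. The helper names are mine, not part of Ollama.

```python
import re
import shutil
import subprocess


def parse_version(text):
    """Pull the first dotted version number out of a string, as a tuple of ints."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.groups())


def installed_ollama_version():
    """Return the installed Ollama version tuple, or None if it can't be read."""
    if shutil.which("ollama") is None:
        return None
    result = subprocess.run(
        ["ollama", "--version"], capture_output=True, text=True, check=False
    )
    # Some builds print warnings on stderr, so search both streams.
    return parse_version(result.stdout + result.stderr)


if __name__ == "__main__":
    version = installed_ollama_version()
    if version is None:
        print("Ollama not found or version not readable: run the installer first.")
    else:
        print("Ollama version:", ".".join(str(n) for n in version))
```

I'm not hard-coding a minimum version here because I don't have an exact cutoff to give you. If Kimi K2.6 cloud is missing from Models, update and check again.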

Find Kimi K2.6 in Models

Open Ollama.

Go to Models.

Scroll to Kimi K2.6.

Open-source.

Agentic.

Ready to run.

Pull or Run With OpenClaw

You can either pull the model directly into Ollama or run it straight with OpenClaw.

I go with the second option because it's one less step.
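If you do pull the model first, you can check that it landed by asking the local Ollama server what it has. This is a rough sketch, assuming Ollama is serving on its default local address and that a pulled model appears in the `/api/tags` listing. The `"kimi"` search string is a guess at how the name is spelled, so match it against whatever your Models list shows.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def model_names(tags_payload):
    """Extract model names from the JSON body returned by GET /api/tags."""
    return [entry["name"] for entry in tags_payload.get("models", [])]


def find_models(tags_payload, needle):
    """Case-insensitive substring match over model names."""
    return [n for n in model_names(tags_payload) if needle.lower() in n.lower()]


def fetch_tags(base_url=OLLAMA_URL):
    """Ask the local Ollama server which models it knows about."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return json.load(resp)
```

With Ollama running, `find_models(fetch_tags(), "kimi")` returns the matching names, or an empty list if nothing has been pulled yet.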

Start the Gateway

Running the model fires up the gateway.

Open OpenClaw.

Check the gateway's running.

That's it.

If you've followed my Ollama + Hermes guide before, this gateway step will be familiar.

Same pattern, different shell.
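"Check the gateway's running" can also be done from a script. Here's a small sketch that only tests whether something is accepting TCP connections on a port. Port 11434 is Ollama's default. The gateway port below is an assumption on my part, so swap in whatever your OpenClaw install reports.

```python
import socket

OLLAMA_PORT = 11434   # Ollama's default local port
GATEWAY_PORT = 18789  # assumed gateway port: confirm against your own OpenClaw output


def is_listening(host, port, timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    for label, port in (("Ollama", OLLAMA_PORT), ("Gateway", GATEWAY_PORT)):
        state = "up" if is_listening("127.0.0.1", port) else "not reachable"
        print(f"{label} on port {port}: {state}")
```

An open port only tells you a process is listening, not that it's healthy, so treat this as a first sanity check.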

Real Tests I Ran

I didn't want to write a theoretical review.

So I used OpenClaw Kimi K2.6 on live tasks.

Test 1: "Research the web for the latest AI news today."

Response back in seconds.

Three news releases, summarised cleanly.

Straight to the point.

No robotic "As an AI language model…"

Just the news.

Test 2: "Check what happened today in AI automation."

Grabbed the web tool.

Pulled fresh info.

Used tools properly.

Reported back with current data.

This is the stuff a non-agentic model struggles with.

Kimi K2.6 handled it like it was built for it — because it was.

Test 3: Multi-step follow-ups

I chained follow-up questions.

Kimi held context, kept tool usage sharp, and didn't forget what task it was on.

That's the agentic edge.
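To make "used tools properly" concrete, here's a toy version of the loop an agent shell runs: ask the model, execute any tool it requests, feed the result back, repeat. This is not OpenClaw's actual code. The message shape is modeled loosely on the tool-call format in Ollama's chat API, and `fake_chat` and `fake_web_search` are stand-ins I made up so the sketch runs offline.

```python
import json


def run_agent(chat, tools, user_prompt, max_steps=5):
    """Loop: ask the model, run any tool it requests, feed the result back.

    chat  -- callable taking the message list and returning one assistant message
    tools -- dict mapping tool name to a plain Python function
    """
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        reply = chat(messages)
        messages.append(reply)
        calls = reply.get("tool_calls") or []
        if not calls:
            return reply["content"], messages  # model answered without a tool
        for call in calls:
            name = call["function"]["name"]
            args = call["function"].get("arguments", {})
            handler = tools.get(name)
            result = handler(**args) if handler else f"unknown tool: {name}"
            messages.append(
                {"role": "tool", "tool_name": name, "content": json.dumps(result)}
            )
    raise RuntimeError("agent did not finish within max_steps")


def fake_chat(messages):
    """Stand-in model: requests one search, then answers once a tool result exists."""
    if not any(m["role"] == "tool" for m in messages):
        return {
            "role": "assistant",
            "content": "",
            "tool_calls": [
                {"function": {"name": "web_search", "arguments": {"query": "AI news"}}}
            ],
        }
    return {"role": "assistant", "content": "Summary based on search results."}


def fake_web_search(query):
    """Stand-in tool: a real one would hit the web."""
    return [f"headline about {query}"]


answer, history = run_agent(fake_chat, {"web_search": fake_web_search}, "Latest AI news?")
print(answer)
```

The multi-step behaviour in Test 3 comes down to that growing `messages` list: every tool result and follow-up stays in the history the model sees on the next turn.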

The Pricing Trap Most People Miss

Here's the thing nobody explains clearly.

The weights are open-source, but through Ollama, Kimi K2.6 runs as a cloud model.

Free within Ollama's token limits.

Heavy daily usage will hit the cap unless you're on an upgraded Ollama plan.

If you're planning to use this thing all day every day, I'd lean on Gemma 4 for the brute-force work.

Gemma 4 is a local model — unlimited usage on your own machine.

You grab Gemma 4 from ollama.com under Models > Gemma 4.

My split:

- Kimi K2.6 for agent tasks (tool calls, research, automation).

- Gemma 4 for bulk tasks (writing, processing, anything high-volume).

Both through Ollama.

Both free-ish.

Pick the right tool for the job.
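If you script your own workflows, that split is easy to encode. A minimal sketch, with placeholder model tags (replace them with the exact names from your Ollama Models list) and a `cloud_quota_left` flag that you'd have to set yourself, since I'm not assuming any particular way of reading your remaining quota.

```python
AGENT_TASKS = {"tool_call", "research", "automation"}

# Placeholder tags: replace with the exact names shown in your Ollama Models list.
CLOUD_AGENT_MODEL = "kimi-k2.6-cloud-placeholder"
LOCAL_BULK_MODEL = "gemma-4-local-placeholder"


def pick_model(task_kind, cloud_quota_left=True):
    """Send agent-style work to the cloud model while quota lasts; keep the rest local."""
    if task_kind in AGENT_TASKS and cloud_quota_left:
        return CLOUD_AGENT_MODEL
    return LOCAL_BULK_MODEL


print(pick_model("research"))
print(pick_model("writing"))
```

Falling back to the local model once the cloud cap is hit keeps the pipeline running, just without the stronger tool use.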

The Hermes Option

Kimi's team blessed Hermes as well.

Hermes got Ollama support baked in recently.

To run Kimi with Hermes:

- Install Hermes with Ollama support.

- Type `launch Hermes` in terminal.

- On setup, select Kimi.

Done.

If you want the head-to-head, I broke down Hermes vs OpenClaw properly — which one fits which workflow, what each shell is actually good at.

Atomic Chat: The Non-Terminal Play

Real talk.

Most people aren't technical enough to enjoy terminals.

That's not an insult — it's reality.

Terminals make everything harder for 80% of the audience.

Atomic Chat solves this.

Same setup.

Same tools.

Proper UI with a model dropdown.

Here's the click-path:

- Go to Atomic Chat.

- Navigate to AI Models > API Keys.

- Find Kimi K2.6 cloud.

- In a separate tab, grab your API key from Ollama Settings > Keys.

- Back in Atomic Chat, pick Ollama in the local model section.

- Select Kimi K2.6 cloud.

- Hit Save.

- Go to Dashboard > Chat.

- Paste the Ollama API key.

- Save again.

- Select Kimi K2.6 cloud Ollama from the dropdown.

- Start sending messages.

Zero terminal.

Same power.

And the dropdown means you can switch between Kimi K2.6 and other models mid-conversation.

I genuinely use Atomic Chat more than I use OpenClaw when I'm not automating things.
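For the curious, the key you grab from Ollama Settings > Keys is the same kind of credential a script would use. Here's a hedged sketch of a chat request against Ollama's hosted API with a bearer token. I'm not claiming this is what Atomic Chat does under the hood, and the model name you pass in has to be the exact tag Ollama lists for Kimi K2.6 cloud.

```python
import json
import urllib.request


def build_chat_request(api_key, model, prompt, base_url="https://ollama.com"):
    """Build (but don't send) a chat request authenticated with an Ollama API key."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON reply instead of a stream of chunks
    }
    return urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request):
    """Send the request and return the assistant's text."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        return json.load(resp)["message"]["content"]
```

Read the key from an environment variable rather than pasting it into the file, then call `send(build_chat_request(key, model_tag, "hello"))`. Every call counts against the same token limits as the UI.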

Works With Other Shells Too

Kimi K2.6 runs with:

- OpenCode

- Codex

- Claude Code

But setup is easiest with OpenClaw or Hermes — Kimi's own team said so.

If you already run OpenClaw + Byterover-style workflows, adding Kimi is just a model swap in the config. Nothing else changes.

OpenClaw Kimi K2.6 Verdict After A Week

OpenClaw Kimi K2.6 is the new default in my stack.

Free (within reason).

Fast.

Agentic by design.

Plays well with every shell worth using.

And the Atomic Chat route means non-technical folks can use it too.

Only real gotcha: cloud token limits.

If you're hammering it all day, pair it with Gemma 4 local for the heavy stuff.

FAQ: OpenClaw Kimi K2.6

Is OpenClaw Kimi K2.6 good for beginners?

Yes — if you use Atomic Chat instead of terminal, the whole thing is point-and-click.

You grab an API key from Ollama, paste it into Atomic Chat, pick Kimi K2.6 cloud, and start chatting.

Genuinely beginner-friendly.

How is Kimi K2.6 different from ChatGPT?

Kimi K2.6 is open-source and agentic-first.

ChatGPT is closed and conversation-first.

Kimi is designed for tool use and multi-step automation — that's where it shines.

What's the one-click setup command?

Grab the latest one from Ollama's install page.

Paste it into terminal, hit enter, and Ollama installs or updates.

Then you pick Kimi K2.6 in Models and run it with OpenClaw.

Can OpenClaw Kimi K2.6 do web research?

Yes — when the web tool is enabled, Kimi K2.6 pulls live info.

I tested it with "latest AI news today" and it returned three fresh releases.

Why did Kimi's team pick OpenClaw and Hermes specifically?

Because those two shells are built around agentic workflows — tool calls, gateway routing, multi-step reasoning.

Kimi K2.6 is trained for those workflows, so the pairing makes sense.

Does Kimi K2.6 work offline?

No — it's a cloud model.

For fully offline, use Gemma 4 local through Ollama.

Related Reading

- Hermes vs OpenClaw — pick the right shell for Kimi K2.6.

- Ollama + Hermes guide — local-first agent stack.

- OpenClaw 4.20 update — latest shell features worth using.

That's my honest take — OpenClaw Kimi K2.6 is the free agent stack I'd run if I had to start over today.