The reason this ChatGPT Image 2 tutorial matters for anyone doing AI automation is simple.

For the first time, you can plug a world-class image model directly into your agent stack.

No more stitching together Midjourney via unofficial wrappers.

No more sketchy third-party APIs.

OpenAI just shipped gpt-image-2 as a first-class API model, and it sits well ahead of every competitor on the ELO leaderboard.

In this tutorial I'm going to cover the automation angle hard — API setup, Hermes and OpenClaw integration, the prompt engine, and how to wire the whole pipeline so your agents can generate on-brand images on autopilot.

The ELO Scoreboard (Why Automation Teams Care)

Quick numbers:

- ChatGPT Image 2 (gpt-image-2): 1512

- Gemini Nano Banana 2: 1271

- GPT Image 1.5: 1241

- Grok Imagine: 1170

For automation, the model you pick matters because every image you ship reflects on your brand, and the quality gap compounds at volume.

ChatGPT Image 2 is now the default.

What It Actually Does

Before we get into automation plumbing, here's what the model can produce:

- Hyperrealistic posters (with legible taglines)

- Multi-panel comics with dialogue

- Diagrams for docs and decks

- Newspaper mockups with real columns

- Pixel art

- Receipts (great for fintech or e-commerce automation)

- Logos

- Fantasy and real-world maps

- Product mockups

- UGC-style iPhone shots

- UI mockups (works beautifully next to Codex 2.0)

And it supports editing existing images — you can paint-mask an area and prompt just that region.

Where ChatGPT Image 2 Lives

- ChatGPT on web, iOS, Android

- Free, Plus, Pro, Business tiers

- API at platform.openai.com (gpt-image-2)

- Thinking mode on Plus/Pro/Business (reasoning-powered)

For automation, the API is where the magic happens.

Thinking Mode — The Reasoning Layer

Here's the headline feature.

ChatGPT Image 2 doesn't just generate pixels from your prompt.

It:

- Parses the prompt

- Plans the composition

- Fills in details you forgot to specify

- Internally sketches the image

- Then renders the final

This is why the ELO is so far ahead.

For automation, this means your prompts can be lazier and still get great results.

Huge for pipelines where humans aren't hand-crafting each prompt.


ChatGPT Image 2 Tutorial — API Setup In 5 Minutes

Step by step:

- Go to platform.openai.com

- Click API keys

- Generate a new key

- Set OPENAI_API_KEY in your env

- Call model gpt-image-2

That's the entire auth flow.
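The steps above can be sketched in Python roughly like this. It's a minimal sketch, assuming the official `openai` Python package is installed and that gpt-image-2 accepts the same `images.generate` parameters and returns the same base64 payload as the earlier GPT Image models. The helper name `generate_image`, the default size, and the optional `client` argument are my own illustrative choices, not part of any official API.

```python
import base64
import os
from pathlib import Path

MODEL_ID = "gpt-image-2"  # model ID as named in this tutorial


def generate_image(prompt, out_path, size="1024x1024", client=None):
    """Generate one image and write it to out_path; returns the Path.

    Assumption: the response carries base64 image data in
    data[0].b64_json, as the earlier GPT Image models do.
    """
    if client is None:
        # Imported lazily so the helper can also be run with a stub client.
        from openai import OpenAI

        client = OpenAI(api_key=os.environ["OPENAI_API_KEY"])

    response = client.images.generate(model=MODEL_ID, prompt=prompt, size=size)
    image_bytes = base64.b64decode(response.data[0].b64_json)

    out_path = Path(out_path)
    out_path.write_bytes(image_bytes)
    return out_path
```

With the key set in your env, calling `generate_image("Hero image for a Friday newsletter about AI agents.", "hero.png")` should write the image to disk. Passing the client in as an argument keeps the helper easy to test without spending API credits.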

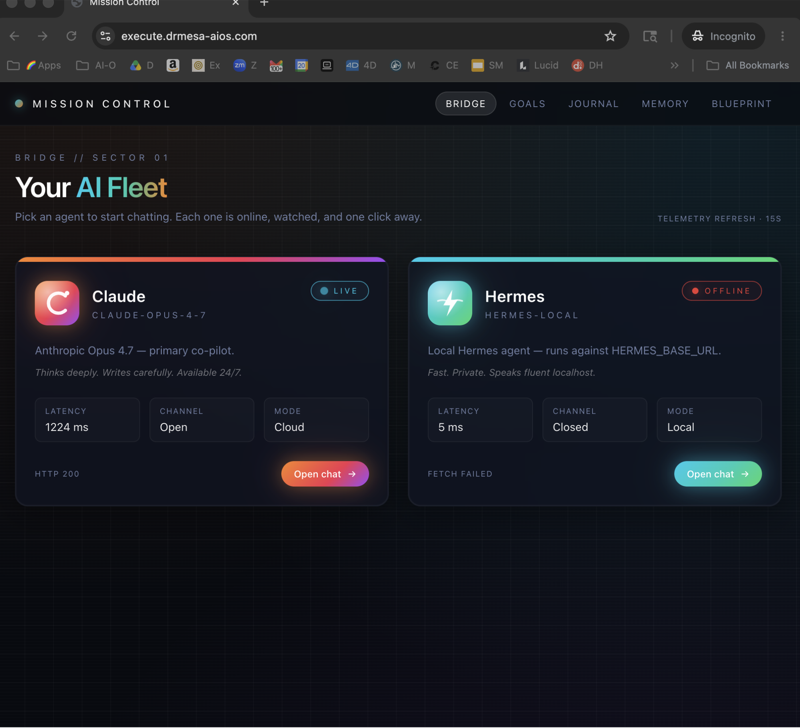

Wiring Into Hermes

If you're running Hermes as your agent orchestrator:

- Open Hermes

- Go to tools → reconfigure → vision

- Paste your OpenAI API key

- Save

Now any Hermes agent with a vision tool can generate images on demand.

I broke down the broader Hermes vision setup in my Hermes AI Video Generator post — the auth pattern is identical.

Wiring Into OpenClaw 4.21

OpenClaw 4.21 has built-in support for gpt-image-2:

- Open OpenClaw

- Go to integrations

- Paste the OpenAI docs URL + your API key

- Enable vision

I covered the full OpenClaw setup in my OpenClaw 4.20 update post — the 4.21 flow is nearly identical with extra image routing.

ChatGPT Image 2 Tutorial Prompt Workflow

Here's the workflow I run for every image my automations generate:

Stage 1 — Idea

A human (or another agent) writes a 1-line brief.

"Hero image for a Friday newsletter about AI agents."

Stage 2 — Expansion

Claude Sonnet 4.6 takes the brief and expands it into a structured 300-word image prompt.

Composition. Style. Lighting. Mood. Colour palette. Subject. Camera angle.

Stage 3 — Generation

The structured prompt goes to gpt-image-2 via API.

Thinking mode enabled.

Stage 4 — QA

Another Claude agent reviews the image against the brief.

If it's off, it rewrites the prompt and regenerates.

Stage 5 — Ship

Image goes to wherever it needs to go — newsletter, blog, social.

Full loop. Zero human in the middle once the brief is written.
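The five stages can be sketched as a plain Python loop. This is an illustration under stated assumptions, not the exact code behind my automations: `run_pipeline`, `Review`, and the four callables are hypothetical names, and you would plug your own Claude and gpt-image-2 calls into them. The retry cap is also my assumption, added so a stubborn brief can't burn API credits forever.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Review:
    approved: bool
    revised_prompt: Optional[str] = None  # QA agent's rewrite when it rejects


def run_pipeline(
    brief: str,
    expand: Callable[[str], str],                 # Stage 2: brief -> structured prompt
    generate: Callable[[str], bytes],             # Stage 3: prompt -> image bytes
    review: Callable[[str, str, bytes], Review],  # Stage 4: brief, prompt, image
    ship: Callable[[bytes], None],                # Stage 5: deliver the image
    max_attempts: int = 3,
) -> bool:
    """Run brief -> expand -> generate -> QA -> ship; True if an image shipped."""
    prompt = expand(brief)
    for _ in range(max_attempts):
        image = generate(prompt)
        verdict = review(brief, prompt, image)
        if verdict.approved:
            ship(image)
            return True
        # QA rejected the image: regenerate with the rewritten prompt if given.
        prompt = verdict.revised_prompt or prompt
    return False
```

Keeping each stage as an injected function means you can swap the expander or the QA agent without touching the loop.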

The Six Tests That Convinced Me

I ran these manually before wiring them into automation:

Movie poster — "The Last Noodle"

Tagline legible, composition solid, beat Gemini cleanly.

8-panel goldfish comic

Richer colours, dialogue actually readable.

Goldie Agency logo

Close call — both models were decent. Slight edge to Image 2.

Fantasy world map

Hyper-detailed, labels legible, coastlines coherent. No other model comes close.

Dog LinkedIn profile

Funny + realistic — the model auto-detected the LinkedIn aesthetic.

Book mockup in a cafe

Uploaded the cover, got perfect lighting + perspective matching. Ready to ship.

Codex 2.0 + ChatGPT Image 2 = Design Team In A Box

For any automation team shipping marketing pages:

- Codex 2.0 handles UI mockups

- ChatGPT Image 2 handles hero images and marketing creative

- Claude Sonnet 4.6 handles copy

- OpenClaw / Hermes orchestrates the whole pipeline

I've been shipping landing pages end-to-end with this stack and the quality beats what I used to pay a design agency £2k a month for.

The Codex mockups are often better than the final built pages — which tells you something.


Aspect Ratios + Specs

- Aspect picker: top right in ChatGPT

- Supports: square, landscape, story (9:16), ultra-wide

- Generation time: ~43 seconds

- Edit mode: yes (mask + prompt)

- Intent detection: yes (generate vs edit from prompt wording)

- Pricing: see platform.openai.com — competitive with GPT Image 1.5

ChatGPT Image 2 Tutorial — Mistakes To Avoid

- Don't hard-code prompts in your pipeline code; use Claude Sonnet 4.6 as a prompt expander

- Don't skip QA — add a review agent downstream

- Don't hard-code aspect ratios — let the pipeline pass them in

- Don't regenerate from scratch — use edit mode with masks for variants

- Don't forget to cache — API costs add up when you're shipping hundreds of images
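The caching and "pass the aspect ratio in" points can be sketched together. This is a minimal sketch assuming a simple on-disk cache keyed by a hash of model, prompt, and size. `cache_key`, `cached_generate`, and the `image_cache` directory are hypothetical names, and `generate` stands in for whatever function actually calls the API.

```python
import hashlib
import json
from pathlib import Path
from typing import Callable


def cache_key(prompt: str, size: str, model: str = "gpt-image-2") -> str:
    """Stable hash of everything that changes the output image."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "size": size}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def cached_generate(
    prompt: str,
    size: str,  # passed in by the pipeline, never hard-coded here
    generate: Callable[[str, str], bytes],
    cache_dir: str = "image_cache",
) -> Path:
    """Return the cached image path, calling generate only on a cache miss."""
    cache = Path(cache_dir)
    cache.mkdir(parents=True, exist_ok=True)
    path = cache / f"{cache_key(prompt, size)}.png"
    if not path.exists():
        path.write_bytes(generate(prompt, size))
    return path
```

Because the size is part of the key, the same prompt at a different aspect ratio is treated as a new image, while an exact repeat costs nothing.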



FAQ

How do I call ChatGPT Image 2 from the API?

Model ID: gpt-image-2. Endpoint: images.generate. Pass your prompt plus a size parameter for the aspect ratio (earlier GPT Image models take pixel dimensions such as 1024x1024).

Does Hermes support ChatGPT Image 2 out of the box?

Yes — tools → reconfigure → vision, paste your OpenAI key, done.

Does OpenClaw 4.21 support ChatGPT Image 2?

Yes. Paste docs URL + API key in integrations. Full routing support.

Can I mask-edit images via the API?

Yes. Upload an image and pass a mask image along with the prompt. Works identically to the ChatGPT UI.
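A mask edit could look something like this. It's a sketch, assuming gpt-image-2 works with the same `images.edit` call (with `image`, `mask`, and `prompt`) that the `openai` Python package exposes for earlier GPT Image models, where the transparent areas of the mask mark the region to repaint. The helper name `edit_region` and the file paths are illustrative.

```python
import base64
from pathlib import Path

MODEL_ID = "gpt-image-2"  # model ID as named in this tutorial


def edit_region(image_path, mask_path, prompt, out_path, client=None):
    """Repaint the masked region of an image and save the result.

    Assumption: the response carries base64 image data in
    data[0].b64_json, as the earlier GPT Image models do.
    """
    if client is None:
        # Imported lazily so the helper can also be run with a stub client.
        from openai import OpenAI

        client = OpenAI()  # reads OPENAI_API_KEY from the environment

    with open(image_path, "rb") as image, open(mask_path, "rb") as mask:
        response = client.images.edit(
            model=MODEL_ID, image=image, mask=mask, prompt=prompt
        )

    out = Path(out_path)
    out.write_bytes(base64.b64decode(response.data[0].b64_json))
    return out
```

This is the call to reach for when you want variants of an approved image rather than a fresh generation.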

How long does API generation take?

~43 seconds per image in my tests, similar to the ChatGPT app.

What prompt format works best for gpt-image-2?

Structured prompts with composition, style, lighting, mood, subject, and palette sections. Use Claude Sonnet 4.6 as a prompt expander.

Can I batch-generate images via the API?

Yes — loop API calls or parallelise with asyncio. Cache results to avoid re-generations.
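Here's one way the asyncio approach could look. It's a sketch under assumptions: `generate_batch` and `generate_one` are hypothetical names, and the concurrency cap of 4 is an arbitrary placeholder you should tune to your own rate limits.

```python
import asyncio
from typing import Awaitable, Callable, List


async def generate_batch(
    prompts: List[str],
    generate_one: Callable[[str], Awaitable[bytes]],
    max_concurrent: int = 4,
) -> List[bytes]:
    """Generate images for all prompts, at most max_concurrent at a time.

    Results come back in the same order as the prompts.
    """
    semaphore = asyncio.Semaphore(max_concurrent)

    async def worker(prompt: str) -> bytes:
        async with semaphore:  # wait for a free slot before calling the API
            return await generate_one(prompt)

    return await asyncio.gather(*(worker(p) for p in prompts))
```

In a real pipeline, `generate_one` could wrap a blocking API helper with `asyncio.to_thread`, or use the async client from the `openai` package. The semaphore keeps a batch of hundreds of prompts from firing all at once.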


Automate the boring bits, keep the creative brief in your head, and let this ChatGPT Image 2 workflow do the rest.