Every DeepSeek V4 tutorial I've seen so far has been either breathless hype or academic gibberish, so I'm writing the one I wish I'd found when I started testing this thing.

If you build AI agents, cost compounds fast.

Run 10,000 calls a day on GPT and you'll notice.

DeepSeek V4 is the answer — maybe.

Let me show you what happened when I actually tested it.


Why This DeepSeek V4 Tutorial Matters For Agents

Agents hit APIs thousands of times.

Every token costs money.

DeepSeek V4 is engineered for efficiency — and that translates directly into cheaper bills.

The Efficiency Numbers

- V4 Pro uses just 27% of the compute of V3.2

- V4 Pro uses 10% of the KV cache memory of V3.2

- V4 Flash uses 10% of the compute and 7% of the KV cache memory of V3.2

Translation: you can run more agents for less money, on the same hardware.
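Here's a rough back-of-envelope sketch of what those ratios could mean in practice. It assumes capacity scales as the simple inverse of relative compute or KV cache use, which is a simplification: real throughput also depends on batching, hardware, and context length. The ratios come from the list above; the dictionary keys and function name are just illustrative.

```python
# Relative resource use versus V3.2, taken from the figures quoted above.
RELATIVE_TO_V32 = {
    "v4-pro": {"compute": 0.27, "kv_cache": 0.10},
    "v4-flash": {"compute": 0.10, "kv_cache": 0.07},
}


def capacity_multiplier(model: str) -> dict[str, float]:
    """Estimate how much more work fits on the same hardware.

    Assumption: capacity is simply the inverse of the relative cost.
    """
    ratios = RELATIVE_TO_V32[model]
    return {resource: round(1 / share, 1) for resource, share in ratios.items()}


for name in RELATIVE_TO_V32:
    print(name, capacity_multiplier(name))
```

Under that assumption, V4 Pro gets you roughly 3.7x the compute headroom and 10x the cache headroom of V3.2 on the same box.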

DeepSeek V4 Pro vs V4 Flash for Agents

Quick breakdown.

V4 Pro

- 1.6T total parameters, 49B active per token

- Use for your smartest agent (planner, orchestrator)

- MoE architecture

- 1M context

V4 Flash

- 284B total parameters, 13B active per token

- Use for worker agents (writers, scrapers, categorisers)

- Cheaper per call

- Also 1M context

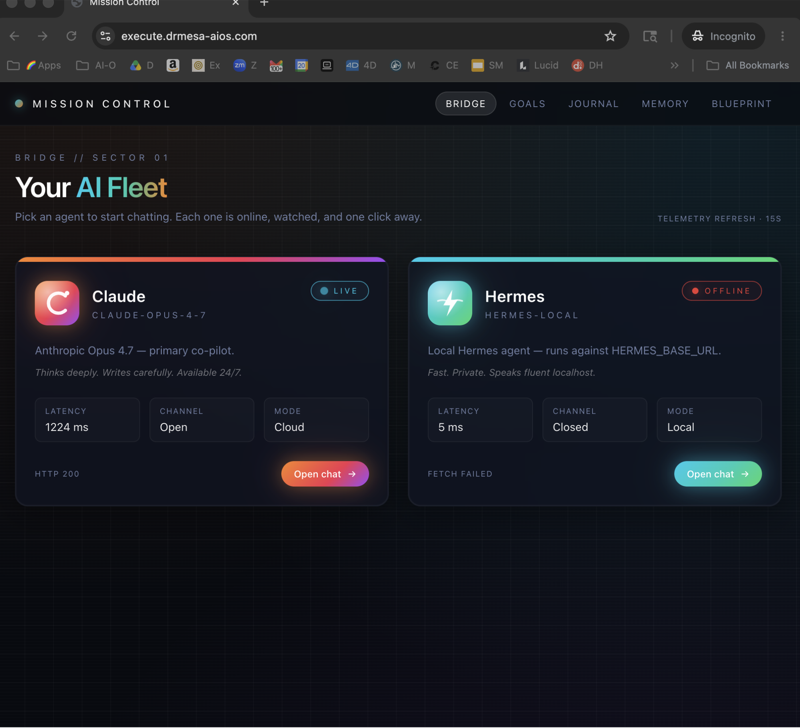

My Agent Stack

I use both.

- Planner → V4 Pro with Deep Think

- Workers → V4 Flash with non-think mode

- Quality check → Claude Opus 4.6 (because polish matters)
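If you want that stack in code, a plain lookup table is enough to start with. This is a minimal sketch, not my production config: the model strings and mode labels below are placeholders I made up for illustration, so swap in whatever identifiers your providers actually expose.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    model: str  # placeholder identifier, not a confirmed API model name
    mode: str   # reasoning mode label as used in this article


# Role -> route, mirroring the three-tier stack described above.
ROUTES = {
    "planner": Route(model="deepseek-v4-pro", mode="deep-think"),
    "worker": Route(model="deepseek-v4-flash", mode="non-think"),
    "quality_check": Route(model="claude-opus-4.6", mode="default"),
}


def route_for(role: str) -> Route:
    """Return the route for a role, defaulting to the cheap worker tier."""
    return ROUTES.get(role, ROUTES["worker"])


print(route_for("planner"))
```

Defaulting unknown roles to the worker tier is a deliberate choice here: a misrouted call should land on the cheapest model, not the most expensive one.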

This pattern works beautifully alongside the Kimi K2.6 agent swarm approach.

The Three Reasoning Modes (API Edition)

The API at platform.deepseek.com gives you three modes:

Non-Think

- Fast, no reasoning chain

- Use for worker agents doing simple tasks

- Cheapest per call

Think High

- Step-by-step reasoning

- Use for decisions that need logic

- Moderate cost

Think Max

- Up to 384K reasoning tokens on a single problem

- Use for complex planning or hard debugging

- Most expensive but still cheaper than Claude reasoning

Pick based on task difficulty.

Don't pay for Think Max on a sentiment classifier.
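One way to enforce that rule is a tiny selector that maps a difficulty estimate to a mode. The 1-10 scale and the thresholds are my own illustrative guesses, not vendor guidance, and the mode strings are labels from this article, not confirmed API parameter values.

```python
def pick_mode(difficulty: int) -> str:
    """Map a 1-10 difficulty estimate to a reasoning mode.

    The thresholds are illustrative guesses, not vendor guidance.
    """
    if not 1 <= difficulty <= 10:
        raise ValueError("difficulty must be between 1 and 10")
    if difficulty <= 3:
        return "non-think"   # classification, extraction, formatting
    if difficulty <= 7:
        return "think-high"  # decisions that need step-by-step logic
    return "think-max"       # complex planning, hard debugging


print(pick_mode(2))  # a sentiment classifier stays on the cheapest mode
```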

DeepSeek V4 Tutorial — Agent Setup Step By Step

Proper walkthrough, no fluff.

Step 1: Get API Access

- Go to platform.deepseek.com

- Sign up

- Add billing

- Copy your API key

Step 2: Check the Endpoint

Important: the old deepseek-chat and deepseek-reasoner endpoints retire after July 24.

Use the new V4 endpoints from day one.
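For reference, here's a minimal sketch of a request using only the Python standard library. It assumes V4 is served through the same OpenAI-style chat completions route that DeepSeek's existing API uses; the model id `deepseek-v4-flash` is a placeholder I can't confirm, so check the docs for the real name before you run it.

```python
import json
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-v4-flash"  # assumption: check the docs for the real V4 id


def build_request(prompt: str, api_key: str, model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)
```

Calling `send(build_request("Say hello", your_key))` with the key from Step 1 makes the actual call. Keeping the build and send steps separate means you can inspect or log the payload without spending tokens.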

Step 3: Build Your First Agent

Structure:

System prompt → DeepSeek V4 Pro (Think High) → JSON output

↓

DeepSeek V4 Flash workers (non-think) execute subtasks

↓

Claude Opus polishes final output if user-facing
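That flow can be sketched in a few lines if you treat each model as a plain function from prompt to text. This is a simplified illustration, not my exact setup: the `run_pipeline` name, the planner prompt wording, and the stub models at the bottom are all hypothetical, and a real version would add retries and JSON validation.

```python
import json
from typing import Callable, Optional

# A "model" here is any function that takes a prompt and returns text.
Model = Callable[[str], str]


def run_pipeline(
    goal: str,
    planner: Model,
    worker: Model,
    polisher: Optional[Model] = None,
) -> str:
    """Plan with one model, fan out to workers, optionally polish."""
    # The planner is asked for a JSON array of subtask strings.
    plan_text = planner(f"Break this goal into subtasks as a JSON array of strings: {goal}")
    subtasks = json.loads(plan_text)
    if not isinstance(subtasks, list):
        raise ValueError("planner did not return a JSON array")

    # Each subtask goes to the cheap worker model.
    results = [worker(str(task)) for task in subtasks]
    draft = "\n".join(results)

    # Only user-facing output pays for the polishing pass.
    return polisher(draft) if polisher else draft


# Stub models so the sketch runs without any API calls.
fake_planner = lambda prompt: '["research", "summarise"]'
fake_worker = lambda task: f"done: {task}"
print(run_pipeline("write a report", fake_planner, fake_worker))
```

Swap the stubs for functions that wrap real API calls and the structure stays the same.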

Step 4: Monitor Costs

Watch the token counts.

Flash will be 3-5x cheaper than Pro per call.
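A small tracker makes the token counts hard to ignore. The prices below are placeholders I picked so Flash lands at 4x cheaper than Pro, inside the range above; they are NOT real rates, so replace them with the numbers from your billing page. The class and key names are hypothetical too.

```python
from collections import defaultdict

# Placeholder prices in USD per million tokens. These are NOT real rates;
# replace them with the numbers from your billing page.
PRICE_PER_MILLION = {
    "pro": {"input": 1.00, "output": 4.00},
    "flash": {"input": 0.25, "output": 1.00},
}


class CostTracker:
    """Accumulate token counts per model and convert them to spend."""

    def __init__(self) -> None:
        self.tokens = defaultdict(lambda: {"input": 0, "output": 0})

    def record(self, model: str, input_tokens: int, output_tokens: int) -> None:
        """Add one call's token usage to the running totals."""
        self.tokens[model]["input"] += input_tokens
        self.tokens[model]["output"] += output_tokens

    def cost(self, model: str) -> float:
        """Return total spend in USD for one model so far."""
        used, price = self.tokens[model], PRICE_PER_MILLION[model]
        return sum(used[kind] * price[kind] for kind in used) / 1_000_000


tracker = CostTracker()
tracker.record("flash", input_tokens=1_000_000, output_tokens=500_000)
print(tracker.cost("flash"))
```

Call `record` after every response, using the token usage your API response reports, and check `cost` per model at the end of the day.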


The Live Test — Does It Actually Hold Up?

I didn't just read the spec sheet.

I tested it.

Test 1: Pong Game in Deep Think Mode

Ran the classic "build Pong" prompt with Deep Think enabled.

Result: it worked, but the paddle was laggy and generation was slow.

The reasoning chain was long and thoughtful, though.

For a one-shot agent call, it did the job.

Test 2: Landing Page in Instant Mode

Prompt: "Build a landing page for an AI SaaS."

Output: clean HTML, but the design felt dated.

V3-era vibes.

For user-facing UI, I'd route to Claude Opus — but for agents parsing structured data, V4 is fine.

Benchmarks That Matter for Agents

Simple QA Verified

- DeepSeek V4: 57.9

- Claude Opus 4.6 Max: 46.2

- GPT 5.4 high: 45.3

For factual agents (research, lookups), DeepSeek actually wins.

Codeforces

- 93.5% solve rate, ranked 23rd against human competitors

For coding agents? DeepSeek is legitimately strong.

MMLU Pro

- V4 Pro: 87.5

- V4 Flash: 86.2

Pro and Flash are close — which means Flash is a bargain for most tasks.

Architecture in 60 Seconds

You don't need to understand the maths.

But you should know why V4 is cheap.

Compressed Sparse Attention

4 tokens get squeezed into 1.

Less memory per request.

Heavily Compressed Attention

128 tokens squeezed to 1 on deeper layers.

1M context becomes actually affordable.
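To see why those ratios matter, count how many cache entries a full 1M-token context leaves behind at each compression level. This is simplified arithmetic based only on the ratios above; it ignores per-layer details and entry sizes, and the function name is just illustrative.

```python
CONTEXT_TOKENS = 1_000_000


def cached_entries(tokens: int, compression_ratio: int) -> int:
    """Entries left after squeezing `compression_ratio` tokens into one.

    Uses ceiling division so a partial final group still takes one entry.
    """
    return -(-tokens // compression_ratio)


print(cached_entries(CONTEXT_TOKENS, 4))    # 250000
print(cached_entries(CONTEXT_TOKENS, 128))  # 7813
```

A million tokens shrinks to a quarter of a million entries at 4:1, and to under eight thousand at 128:1.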

Manifold Constrained Hyperconnections

Layers talk to each other 4x more.

Better reasoning, same parameter count.

Muon Optimizer

Not AdamW.

Faster training convergence = they can iterate more per dollar.

Trained on 32T Tokens

With context length grown progressively: 4K → 16K → 64K → 1M.

Running DeepSeek V4 Locally

Skip the API bill entirely.

LM Studio Setup

- Install LM Studio

- Search "DeepSeek V4 Flash"

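- Download a quantised version (at 4 bits per weight, 284B parameters is roughly 142GB, so a 24GB GPU will not hold it alone; expect to offload most of the weights to system RAM)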

- Load it, fire requests via localhost

Hugging Face Setup

- Pull weights from deepseek-ai/DeepSeek-V4-Flash

- Use vLLM or TGI for serving

- Run as drop-in OpenAI-compatible endpoint
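Both local routes end up speaking the same OpenAI-style protocol, so switching between them is mostly a base URL change. The sketch below uses the default ports as I understand them (1234 for LM Studio's local server, 8000 for vLLM); yours may differ if you changed the settings, and the helper names are hypothetical.

```python
# Default local base URLs. Ports can be changed in each tool's settings.
LOCAL_BACKENDS = {
    "lmstudio": "http://localhost:1234/v1",
    "vllm": "http://localhost:8000/v1",
}


def chat_url(backend: str) -> str:
    """Return the chat completions URL for a local OpenAI-compatible server."""
    base = LOCAL_BACKENDS[backend].rstrip("/")
    return f"{base}/chat/completions"


def chat_payload(prompt: str, model: str) -> dict:
    """Build the JSON body; `model` must match the name the server loaded."""
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


print(chat_url("lmstudio"))
print(chat_url("vllm"))
```

POST the payload as JSON to that URL and your agent code doesn't need to know whether it's talking to the cloud or your own machine.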

Pairs nicely with Ollama and Hermes if you're already running a local model stack.

DeepSeek V4 Tutorial — When NOT To Use It

Honest assessment.

- User-facing UI generation → Claude Opus wins

- Creative writing and copy → GPT 5.5 wins

- Fast web search agents → GPT still has the edge

- Multimodal (image, audio) → not a strength yet

For everything else, DeepSeek V4 is genuinely competitive and much cheaper.

FAQ

How do I use DeepSeek V4 for agents?

Sign up at platform.deepseek.com, get an API key, use the new V4 endpoints with the reasoning mode that matches task difficulty.

Route simple tasks to non-think mode for lowest cost.

Is DeepSeek V4 really cheaper than GPT 5.5?

Yes, significantly.

Per-token prices are a fraction of GPT 5.5, and efficiency means fewer compute cycles per response.

Can I run DeepSeek V4 agents locally?

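Yes. A quantised V4 Flash can run locally via LM Studio or vLLM, though at 284B total parameters it needs far more memory than a single 24GB card provides, so plan on offloading to system RAM or using multiple GPUs.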

V4 Pro needs enterprise hardware.

What's the context window on DeepSeek V4?

1 million tokens on both Pro and Flash.

Enabled by compressed sparse attention and heavily compressed attention layers.

What's Deep Think mode used for?

Hard reasoning problems — maths, complex code, multi-step planning.

Uses up to 384K thinking tokens before producing an answer.

When do old DeepSeek APIs shut off?

deepseek-chat and deepseek-reasoner retire after July 24.

Move to V4 endpoints now.

Related Reading

- Kimi K2.6 agent swarms — another open model, perfect pairing with DeepSeek for worker roles

- GPT 5.5 Pro — the competition from OpenAI

- Ollama + Hermes — if you want a full local LLM stack alongside DeepSeek



Final Thoughts

If you're building agents and haven't looked at open-source models this year, this DeepSeek V4 tutorial is your sign to stop overpaying and start shipping cheaper, faster, smarter workflows.