DeepSeek V4 Ollama is the engine I now plug into five different harnesses, all running in parallel terminal tabs, all completely free.

Let me show you the full stack and how each piece earns its keep.

Here's the secret nobody tells you about agent stacks.

The model is replaceable. The harness is the moat.

I've been saying this for months but DeepSeek V4 Ollama proves it in real time — one model, five different harnesses, five completely different superpowers.

Why DeepSeek V4 Ollama is the Right Brain for the Job

Before I get into the harnesses, quick word on why DeepSeek V4 Flash through Ollama is the model worth wiring up.

- Free tier — generous limits, no credit card

- Cloud-hosted — no local hardware needed

- Frontier-class reasoning — genuinely competes with the paid Tier-1 models

- Standard Ollama interface — every harness that speaks Ollama works instantly

That last one is the killer. Because Ollama is the de facto local AI standard, anything that supports "local model via Ollama endpoint" now also supports DeepSeek V4 Flash.

Setting It Up

Two commands.

curl -fsSL https://ollama.com/install.sh | sh

ollama run deepseek-v4-flash

That's the whole install. Cloud model, no download.

If you've never run Ollama before, my walkthrough on Ollama + Hermes covers the basics — same install, different model.

Now to the fun part. Cmd+T five times in your terminal app. We're going parallel.

Harness 1: Claude Code + DeepSeek V4 Ollama

Claude Code's planning loop is the best in the business — it breaks down tasks, holds context, retries failed steps.

Point it at DeepSeek V4 through Ollama and you get Claude's harness with DeepSeek's brain.

I've used this for refactoring sessions where I'd normally burn £15 in API costs. DeepSeek V4 Ollama version: free.

If you want the harness primer first, Claude Code free setup walks through the install.

Harness 2: Codex + DeepSeek V4 Ollama

OpenAI's Codex CLI — yes, you can point it at non-OpenAI models. That's the trick.

I asked Codex+DeepSeek to build a ping pong game.

Five minutes. Working browser game. Done.

For pure "build me this code" tasks, this combo punches well above its weight class.

Harness 3: Open Code + DeepSeek V4 Ollama

Open Code is the underrated one.

Quietly built me a full blog post page locally — HTML, CSS, the lot — while I was making coffee.

It's less famous than Claude Code or Codex but it's faster for one-shot page builds. Worth a slot in your tabs.

Harness 4: OpenClaw + DeepSeek V4 Ollama

This is where DeepSeek V4 Ollama gets agentic.

OpenClaw runs as a local web UI on a gateway port. Once connected to your Ollama endpoint, it can drive your browser.

I told it "go speak to ChatGPT" — it opened ChatGPT in my browser instantly and started typing a query.

Live browser control, model-driven, free.

If you've been following the OpenClaw with Kimi K2.6 breakdown, the DeepSeek V4 swap is even smoother — V4 Flash responds faster on the browser-action loop.

🔥 Want to see the OpenClaw + DeepSeek V4 setup live? Inside the AI Profit Boardroom I've got step-by-step videos showing the OpenClaw config, the gateway setup, and the exact prompts I use for browser automation. Plus weekly calls where I'll look at YOUR setup. 3,000+ members already inside. → Get access here

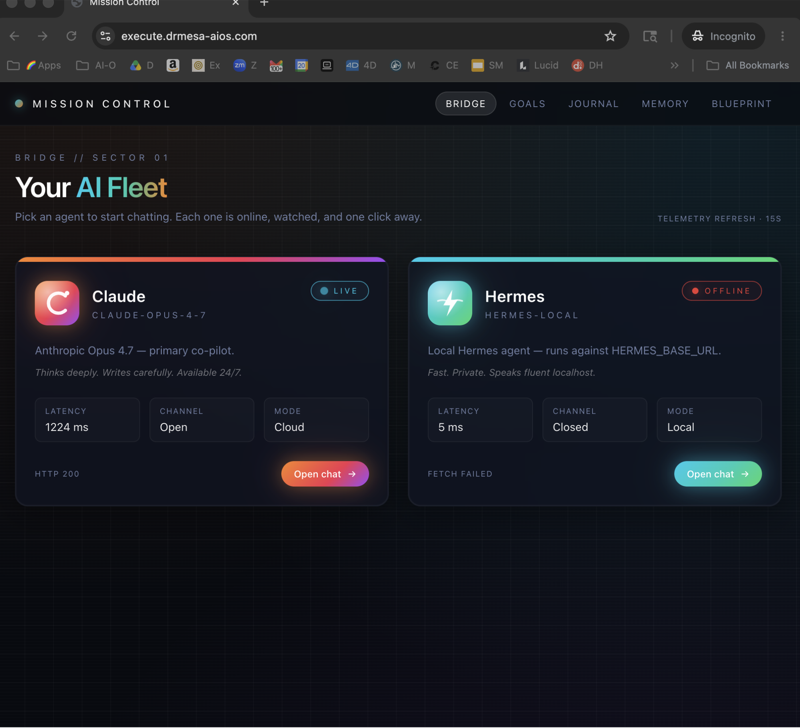

Harness 5: Hermes + DeepSeek V4 Ollama

Hermes is the harness for scheduled work — set it once, it runs forever.

I have a Hermes agent running daily that:

- Researches latest AI automation news

- Summarises the top five stories

- Pings me a Slack message at 9am

Setup time: 90 seconds.

Cost: zero.

This was the harness I was most sceptical of — scheduled agents have historically been janky. With DeepSeek V4 Ollama as the brain, they're rock solid.

The pairing is much smoother than what I documented in Hermes with Gemma 4 — V4 Flash is just a stronger reasoning brain for the scheduled-decision flows.

What Happens When You Run All Five at Once

Here's what my screen looks like at 10am on a workday:

- Tab 1 — Claude Code refactoring a project

- Tab 2 — Codex building a small utility

- Tab 3 — Open Code shipping a landing page

- Tab 4 — OpenClaw scraping competitor pricing

- Tab 5 — Hermes running daily research

- Tab 6 — Raw Ollama chat for my one-off questions

All powered by the same DeepSeek V4 Ollama install.

Five different jobs. One brain. Zero bills.

The Insight That Changes Everything

This is the bit most people miss.

DeepSeek V4 raw on chat.deepseek.com is fine — it's a chat interface with a smart model.

But it can't browse, can't schedule, can't run tools, can't ship code on its own.

Wrap that exact same model in OpenClaw and it browses.

Wrap it in Hermes and it schedules.

Wrap it in Codex and it ships code.

The harness controls the API. The model is just the brain inside whichever body you pick.

DeepSeek V4 Ollama gives you the brain for free. The harnesses are also free. So your only constraint is which body you want to give it today.

My Honest Take After Two Weeks

I've replaced about £180/month of paid AI subscriptions with this stack.

Quality is genuinely on par. Sometimes better.

The only thing I still pay for is one premium model for client deliverables where I want absolute top-tier output. Everything else — daily code, daily research, daily browser tasks — runs on DeepSeek V4 Ollama through one of these harnesses.

If you're spending £100+/month on AI tools and not running this stack, you're leaving money on the table.

Want my full free-stack playbook? Inside the AI Profit Boardroom I've documented the exact configs for all five harnesses pointing at DeepSeek V4 Ollama, plus the prompts I use for each one. Step-by-step videos, weekly coaching, 3,000+ members. → Join here

Related reading

- DeepSeek V4 Tutorial — the model deep-dive

- OpenClaw with Kimi K2.6 — same harness, different model comparison

- Hermes Agent Mission Control — the scheduled-agent control plane

FAQ

Can DeepSeek V4 Ollama really power five harnesses simultaneously?

Yes — and the free tier handles it well within reasonable use. Each harness is just making API calls to your local Ollama endpoint, which forwards to the cloud. The bottleneck is rate limits, not your machine.

Which harness should I start with for DeepSeek V4 Ollama?

Start with Claude Code if you're doing coding work. Start with Hermes if you want scheduled agents. Start with OpenClaw if you want browser automation. Pick the one that matches your immediate pain.

Is the DeepSeek V4 Ollama free tier really enough for daily use?

For most solo operators, yes. I've been using it heavily for two weeks and haven't hit limits in normal workflows. If you're pushing thousands of requests per day, you'll want to budget for the paid tier.

Why does DeepSeek V4 think in Chinese before answering?

It's the model's internal reasoning chain leaking through — DeepSeek is Chinese-developed and the chain-of-thought tokens often surface in Chinese before the final English response. Doesn't affect quality.

Can I mix DeepSeek V4 Ollama with paid models in the same harness?

Yes. Most harnesses let you switch models per session. I'll often use DeepSeek V4 for the bulk of work and switch to a paid model for the final review pass.

Is DeepSeek V4 Ollama better than running a local model?

For most people, yes. No hardware requirements, no download, frontier-class output. Local models still win if you need offline use or strict data privacy — but for everyday work, V4 Flash on Ollama is the easier path.

Get a FREE AI Course + Community + 1,000 AI Agents 👉 Join here

Video notes + links to the tools 👉 Boardroom

Learn how I make these videos 👉 aiprofitboardroom.com

DeepSeek V4 Ollama is the brain that makes the entire free agent stack viable — wire it into the harnesses above and you've got the most capable zero-cost AI setup available right now.